When I use Flink to consume kafka in real time and write to hudi MOR table, the configuration of the response is as follows:

.option(FlinkOptions.READ_AS_STREAMING, true) .option(FlinkOptions.TABLE_TYPE, HoodieTableType.MERGE_ON_READ) // .option(FlinkOptions.INSERT_CLUSTER, true) .option(FlinkOptions.READ_STREAMING_CHECK_INTERVAL, 60) // .option(FlinkOptions.OPERATION, "insert") .option(FlinkOptions.BUCKET_ASSIGN_TASKS, taskParallelism) .option(FlinkOptions.WRITE_COMMIT_ACK_TIMEOUT, 20000L) .option(FlinkOptions.WRITE_PARQUET_MAX_FILE_SIZE, 128) .option(FlinkOptions.COMPACTION_MAX_MEMORY, 512) .option(FlinkOptions.WRITE_MERGE_MAX_MEMORY, 1024) .option(FlinkOptions.WRITE_TASK_MAX_SIZE, 2048D) .option(FlinkOptions.COMPACTION_MAX_MEMORY, 1024) .option(FlinkOptions.RETRY_INTERVAL_MS, 5000L) .option(FlinkOptions.RETRY_TIMES, 5) .option(FlinkOptions.WRITE_TASKS, 3 * taskParallelism) .option(FlinkOptions.READ_TASKS,4) .option(FlinkOptions.COMPACTION_TASKS, taskParallelism) .option(FlinkOptions.METADATA_ENABLED, false) .option(FlinkOptions.WRITE_RATE_LIMIT, 5000) //写入速率限制开启,防止流量抖动、写入流畅 .option(FlinkOptions.COMPACTION_ASYNC_ENABLED, true) .option(FlinkOptions.COMPACTION_DELTA_COMMITS, "5") .option(FlinkOptions.COMPACTION_TRIGGER_STRATEGY, "num_commits") .option(FlinkOptions.COMPACTION_TIMEOUT_SECONDS, 5 * 60 * 60) .option(FlinkOptions.HIVE_SYNC_DB, sinkDbName) .option(FlinkOptions.HIVE_SYNC_TABLE, sinkTableName) .option(FlinkOptions.HIVE_SYNC_ENABLED, true) .option(FlinkOptions.HIVE_SYNC_MODE, "HMS") .option(FlinkOptions.HIVE_SYNC_METASTORE_URIS, "thrift://hdp05:9083") .option(FlinkOptions.HIVE_SYNC_JDBC_URL, "jdbc:hive2://hdp05:10000") .option(FlinkOptions.HIVE_SYNC_SKIP_RO_SUFFIX, true)

Expected behavior

A clear and concise description of what you expected to happen.

Environment Description

-

Hudi version : 0.11.0

-

Spark version : spark3.2.1

-

Hive version :2.3.7

-

Hadoop version :2.7.3

-

Storage (HDFS/S3/GCS..) :HDFS

-

Running on Docker? (yes/no) :no

Additional context

Add any other context about the problem here.

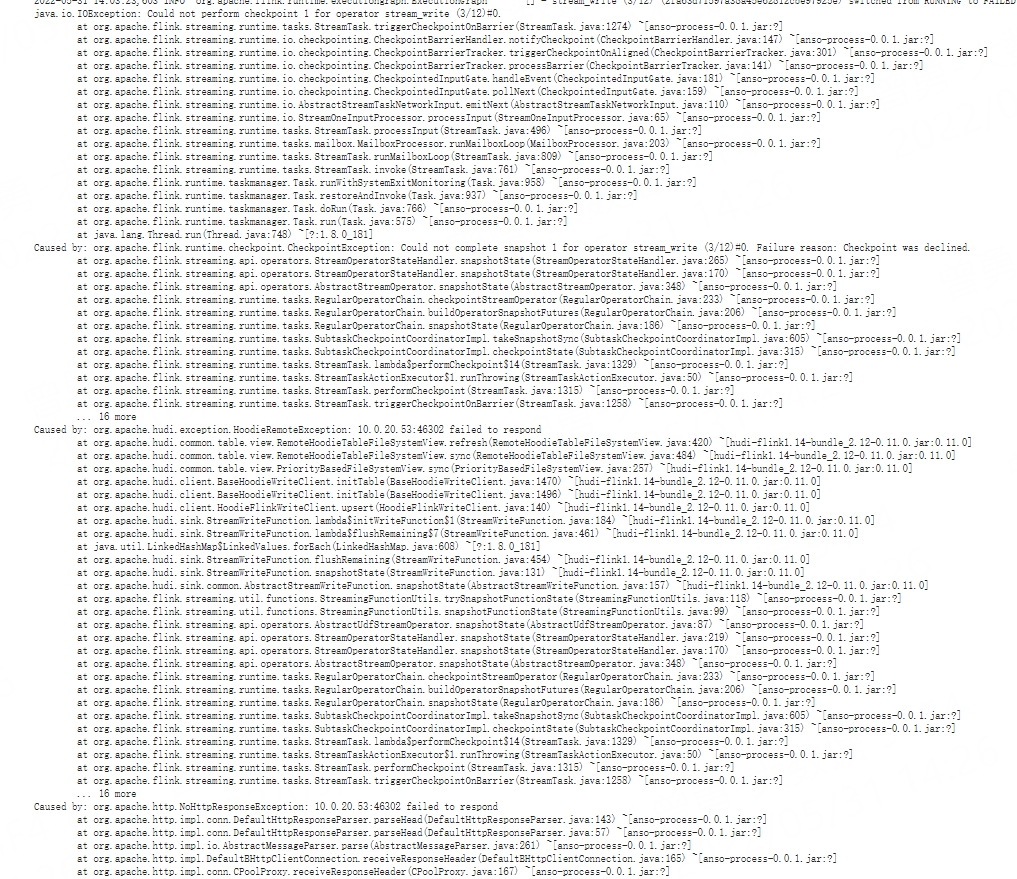

Stacktrace

Add the stacktrace of the error.

When I use Flink to consume kafka in real time and write to hudi MOR table, the configuration of the response is as follows:

.option(FlinkOptions.READ_AS_STREAMING, true) .option(FlinkOptions.TABLE_TYPE, HoodieTableType.MERGE_ON_READ) // .option(FlinkOptions.INSERT_CLUSTER, true) .option(FlinkOptions.READ_STREAMING_CHECK_INTERVAL, 60) // .option(FlinkOptions.OPERATION, "insert") .option(FlinkOptions.BUCKET_ASSIGN_TASKS, taskParallelism) .option(FlinkOptions.WRITE_COMMIT_ACK_TIMEOUT, 20000L) .option(FlinkOptions.WRITE_PARQUET_MAX_FILE_SIZE, 128) .option(FlinkOptions.COMPACTION_MAX_MEMORY, 512) .option(FlinkOptions.WRITE_MERGE_MAX_MEMORY, 1024) .option(FlinkOptions.WRITE_TASK_MAX_SIZE, 2048D) .option(FlinkOptions.COMPACTION_MAX_MEMORY, 1024) .option(FlinkOptions.RETRY_INTERVAL_MS, 5000L) .option(FlinkOptions.RETRY_TIMES, 5) .option(FlinkOptions.WRITE_TASKS, 3 * taskParallelism) .option(FlinkOptions.READ_TASKS,4) .option(FlinkOptions.COMPACTION_TASKS, taskParallelism) .option(FlinkOptions.METADATA_ENABLED, false) .option(FlinkOptions.WRITE_RATE_LIMIT, 5000) //写入速率限制开启,防止流量抖动、写入流畅 .option(FlinkOptions.COMPACTION_ASYNC_ENABLED, true) .option(FlinkOptions.COMPACTION_DELTA_COMMITS, "5") .option(FlinkOptions.COMPACTION_TRIGGER_STRATEGY, "num_commits") .option(FlinkOptions.COMPACTION_TIMEOUT_SECONDS, 5 * 60 * 60) .option(FlinkOptions.HIVE_SYNC_DB, sinkDbName) .option(FlinkOptions.HIVE_SYNC_TABLE, sinkTableName) .option(FlinkOptions.HIVE_SYNC_ENABLED, true) .option(FlinkOptions.HIVE_SYNC_MODE, "HMS") .option(FlinkOptions.HIVE_SYNC_METASTORE_URIS, "thrift://hdp05:9083") .option(FlinkOptions.HIVE_SYNC_JDBC_URL, "jdbc:hive2://hdp05:10000") .option(FlinkOptions.HIVE_SYNC_SKIP_RO_SUFFIX, true)Expected behavior

A clear and concise description of what you expected to happen.

Environment Description

Hudi version : 0.11.0

Spark version : spark3.2.1

Hive version :2.3.7

Hadoop version :2.7.3

Storage (HDFS/S3/GCS..) :HDFS

Running on Docker? (yes/no) :no

Additional context

Add any other context about the problem here.

Stacktrace

Add the stacktrace of the error.