Conversation

|

Thanks for submitting this pull request! The maintainers of this repository would appreciate if you could update the CHANGELOG.md based on your changes. |

|

This PR looks great, thanks for the contribution! Several initial considerations:

|

|

@theNded Thanks for the feedback. 1.) Yes I can totally do it, although I more in favor of atomic PR, so I would probably happen in the next PR I will clean up what I've done, add some experiments on the tutorials showing the use case of robust kernels, and move forward. If you have any other concerns respect to the design It would be awesome to know before I open the cleaned version of these changes. Thanks! |

|

@nachovizzo Thanks and looking forward to the PR! |

This is not of any help. And just add extra-dead code. If in the future we really want it, we just need to revert this specific commit

|

@theNded this PR is ready to review. I did not add any sort of benchmark right now because I ran out of time. Even so, the I hope this contribution is helpful for all the Open3D users! |

theNded

left a comment

theNded

left a comment

There was a problem hiding this comment.

Reviewed 5 of 11 files at r1, 1 of 2 files at r2, 7 of 7 files at r3, 1 of 1 files at r4.

Reviewable status: all files reviewed, 4 unresolved discussions (waiting on @nachovizzo and @yxlao)

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 39 at r4 (raw file):

namespace registration { double L2Loss::Weight(double /*residual*/) const { return 1.0; }

Overall I'm thinking about making Weights inline member functions, since they are mostly one-liners.

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 46 at r4 (raw file):

double HuberLoss::Weight(double residual) const { const double e = std::abs(residual);

return k_ / std::max(e, k_);

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 54 at r4 (raw file):

double CauchyLoss::Weight(double residual) const { return 1.0 / (1 + std::pow(residual / k_, 2.0));

Would you consider writing a short inline function/Macro square(x) for these weights? std::pow is slower than x*x. It is a micro optimization though, you may ignore it.

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 63 at r4 (raw file):

double TukeyLoss::Weight(double residual) const { const double e = std::abs(residual); if (e > k_) {

return std::pow((1.0 - std::pow(std::min(1, e / k_), 2.0)), 2.0);

|

Thanks for the great work! I added some suggestions for minor changes.

|

nachovizzo

left a comment

nachovizzo

left a comment

There was a problem hiding this comment.

Reviewable status: all files reviewed, 4 unresolved discussions (waiting on @theNded and @yxlao)

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 39 at r4 (raw file):

Previously, theNded (Wei Dong) wrote…

Overall I'm thinking about making

Weights inline member functions, since they are mostly one-liners.

I totally agree. Even so, the fact that C++ does not have proper support for polymorphism without virtual disable the compiler to inline these methods. I spent a few hours, there are 3/4 cppcon talks trying to address this problem. Most of them end up writing a header-only library that it's usually only supported in c++17. With this I want to say, I didn't find any "elegant" solution for this. I didn't want to use pointers, nor smartpointers, I don't see why we need to allocate one RobustKernel object in the heap... the problem is that it's the only way to make this polymorphic. For the time being, I didn't detect any real overhead.

Here are 2 examples to investigate further, even the trivial L2Loss::Weight function can not be inlined by the compiler(due to memory allocations in the heap and so on...)

- Polymorphic example: https://godbolt.org/z/1cahrd

- Fixed kernel example: https://godbolt.org/z/5Eofr7, observe how in this case the loss function is trivially inlied by the compiler and further optimized. I can add

inlineto the declarations, but it makes no real difference.

PS: I''m open to suggestions if you find a better way to implement this strategy pattern

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 46 at r4 (raw file):

Previously, theNded (Wei Dong) wrote…

return k_ / std::max(e, k_);

Great catch!

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 54 at r4 (raw file):

Previously, theNded (Wei Dong) wrote…

Would you consider writing a short inline function/Macro square(x) for these weights? std::pow is slower than x*x. It is a micro optimization though, you may ignore it.

Done

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 63 at r4 (raw file):

Previously, theNded (Wei Dong) wrote…

return std::pow((1.0 - std::pow(std::min(1, e / k_), 2.0)), 2.0);

Got it, much better

Address comments from PR#2425

Should we attempt to address this or in other PR? |

nachovizzo

left a comment

nachovizzo

left a comment

There was a problem hiding this comment.

Reviewable status: 13 of 14 files reviewed, 4 unresolved discussions (waiting on @theNded and @yxlao)

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 39 at r4 (raw file):

Previously, nachovizzo (Ignacio Vizzo) wrote…

I totally agree. Even so, the fact that

C++does not have proper support for polymorphism without virtual disable the compiler to inline these methods. I spent a few hours, there are 3/4 cppcon talks trying to address this problem. Most of them end up writing a header-only library that it's usually only supported inc++17. With this I want to say, I didn't find any "elegant" solution for this. I didn't want to use pointers, norsmartpointers, I don't see why we need to allocate oneRobustKernelobject in the heap... the problem is that it's the only way to make this polymorphic. For the time being, I didn't detect any real overhead.Here are 2 examples to investigate further, even the trivial

L2Loss::Weightfunction can not be inlined by the compiler(due to memory allocations in the heap and so on...)

- Polymorphic example: https://godbolt.org/z/1cahrd

- Fixed kernel example: https://godbolt.org/z/5Eofr7, observe how in this case the loss function is trivially inlied by the compiler and further optimized. I can add

inlineto the declarations, but it makes no real difference.PS: I''m open to suggestions if you find a better way to implement this

strategy pattern

Done.

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 46 at r4 (raw file):

Previously, nachovizzo (Ignacio Vizzo) wrote…

Great catch!

Done.

cpp/open3d/pipelines/registration/RobustKernel.cpp, line 63 at r4 (raw file):

Previously, nachovizzo (Ignacio Vizzo) wrote…

Got it, much better

Done.

Yeah of course, "next steps" here definitely mean other PRs :-) |

theNded

left a comment

theNded

left a comment

There was a problem hiding this comment.

Reviewed 1 of 1 files at r5.

Reviewable status:complete! all files reviewed, all discussions resolved (waiting on @yxlao)

|

Clones added

============

- cpp/open3d/pipelines/registration/TransformationEstimation.h 2

See the complete overview on Codacy |

This somehow extends PR isl-org#2425

Ideally one could also add a robust kernel in this step. This extends PR isl-org#2425

* Add weighting function for the per-row version of ComputeJTJandJTr. This somehow extends PR #2425 * Add weight vector to match ComputeJTJandJr new API. Ideally one could also add a robust kernel in this step. This extends PR #2425 * Expose weight parameter for ColorMapOptimizationJacobian * Finish ColorizeICP re-binding in python * Move private function to unnamed namespace * Update python examples * Fix generic->geomtric * Fix tests. After changing how we compute the Jacbonians API * Fix unit tests

This somehow extends PR isl-org#2425

* Add Generalized ICP draft * Add weighting function for the per-row version of ComputeJTJandJTr. This somehow extends PR #2425 * wip * Add weighting function for the per-row version of ComputeJTJandJTr. This somehow extends PR #2425 * Add weight vector to match ComputeJTJandJr new API. Ideally one could also add a robust kernel in this step. This extends PR #2425 * Expose weight parameter for ColorMapOptimizationJacobian * Finish ColorizeICP re-binding in python * Move private function to unnamed namespace * Update python examples * Fix generic->geomtric * Finish draft of the optimization pipeline * Change naming * Fix tests. After changing how we compute the Jacbonians API * Add Python bindings for Genrealized ICP * Add draft for ComputeRMSE * Fix unit tests * Fix GenerelaizedICP draft * Log also correspondence size for each ICP iteration * Fix optimizaiton calculation * Attempt to fix optimization problem * Fix source/target swap. This finally works! * One step closer to gicp * Complete copy-paste from Registartion.cpp No time to deal with API changes, will fix this later * Finish gicp implentation (hopefully) * Turn gicp into an ugly polymorphic code * Use existing normal in the PointCloud instead of re-compute * Fix stupid bug * Add small chec * fix * Another huge bugfix. M -> M-1 * last bugfix of the night * Another bugfix. I can now obtain the same results as the original implemenation * Don't apply incremental rotation to Source Covariance Matrix. On my experiments this only changed a bit the results. And given the fact that we should change the complete API, I guess it makes no sense * Compute source covariance for each iteration. Altough this might look reasonable, it doesn't make sense to re-compute the covariances matrix at each ICP iteration. Although the source cloud is moving... so "it might" change... * Add comments and fix mintor details * Fix style * Update CHANGELOG.md * Fix missing sign. Epsilon must be 0.001 * Add covariance-point information to geometry::PointCloud type This should be replaced in the future with the dictionary-based class. For the moment, this allow us to cleanly update the covariance information, both from the GeneralizedICP method and from the outside world. * Improve the GeneralizedICP initialization step * Unused variables cleanup * Cleanup a bit * Add covariance data member to PointCloud class * Add Variance propagation methods * Propagate Covariance information to VoxelDownSample methods * Update PointCloud pybindings * Reuse CovarianceEstimate code for Normal Computation * Add matrix3dvector pybind type to expose PointCloud::covariances_ * Expose HasCovariances() method to Python API * Expose the PointCloud::EstimateCovariances() method to Python API * Opaque the covariance type * Sort things out * Fix matrix3dvector pybind. Eigen::Matrix3d is not a 16bits aligned type(like Matrix4d). Thus, we can't use Eigen::aligned_allocator<T>, and therefore we have to override the default template parameters. This fix the issue with PointCloud::covariances_ python bindings. Now it's fully working * Start migrating towards the new PointCloud API * Quick implementation of the SVD * Initialize with zeros not with identity * Initialize with zeros not with identity * Remove unused variable * Add unit tests for new covariance member * Covariance Matrix always positive-semi definitive * Covariance Matrix always positive-semi definitive * Simplify * Clear temp covariances buffer for normal estimation. If the user doesn't explicitly request to estimate the covariance member shouldn't be populated * Fix bug * Still not working as expected * Add GeneralizedICP back to build * Add static method to compute covariances. This allows a cleaner EstimateNormals implementation * Make method static * Revert log change * Already checked insided RegistrationICP * Fix some comments * Bring branch closer to master * Happy new year * Expose epsilon parameter for GeneralizedICP * Two changes in this branch * Make it more similar to ColorizedICP * Improve some comments * Fix some docs: * Add some tests * Add missing unit-test (disabled for now) * Improve a bit the comments * Check for covariances instead of normals * Simplify wrapper * Prevent GeneralizedICP for running if no covariance are there * Fix EstimateCovariance logic This static method always requires to resize the output vector of matrices * OpenMP static schedule * It's faster to use the fast-normal-computation rather than SVD * Put back comment * Add simple tutorial * Improve a bit the tutorial * Change titles * Fix notebook style * Fix unit tests This actually works in gcc-9 * remove jupytor example temporarily * add generalized ICP cpp example * support/test generalized icp in reconstruction system Co-authored-by: Wei Dong <weidong@andrew.cmu.edu>

Robust Kernels for Registration in Open3D

thresholdTukeyLossThis PR brings the power of statistical robust kernels to aid the registration pipelines built-in

Open3D. For the time being, it only changes thePointToPlaneICP algorithm but could be easily adapted for other optimization problems.Results

The full explanation on this it's hosted on the tutorial

robust_kernels. The results are shown below:Noisy Input Data

Vanilla ICP

Vanilla ICP + higher distance

thresholdRobust ICP using

TukeyLossAs we show, using a robust kernel(o robust loss function) in the

PointToPlaneoptimization, leads to much better results in the context of noisy data.Kernels Implemented so far

RobustKernel (base class)

Adding a new robust kernel is as easy as inheriting from

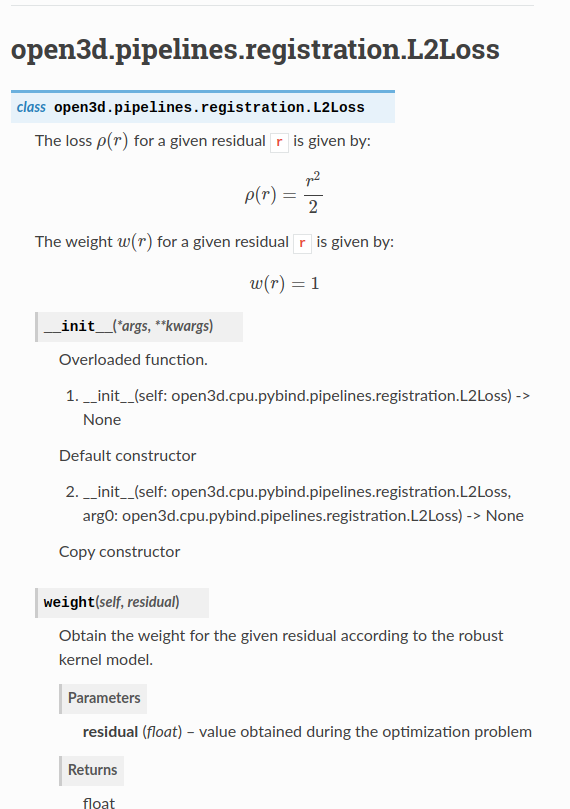

open3d::pipelines::registration::RobustKerneland implemented thevirtual Weight(double residual)method.L2

Is the Vanilla ICP loss function, by default, this is the selected kernel(thus, no real robust optimization). Although this guarantee backward compatibility will all the existing code that relies on

Open3Dregistration ICP pipelines.L1

Huber

Cauchy

German-McClure

Tukey

Documentation

I spent extra time and effort trying to make the documentation the most extensible possible. Please check the tutorial and docs generated by this PR for more information.

More info

For bibliography, you could check out https://arxiv.org/pdf/1810.01474.pdf or https://arxiv.org/pdf/2004.14938.pdf (this adaptive kernel I plane to add soon)

This change is